The Role of System Prompting in AI Tool Design: How Puretools Achieves Precision

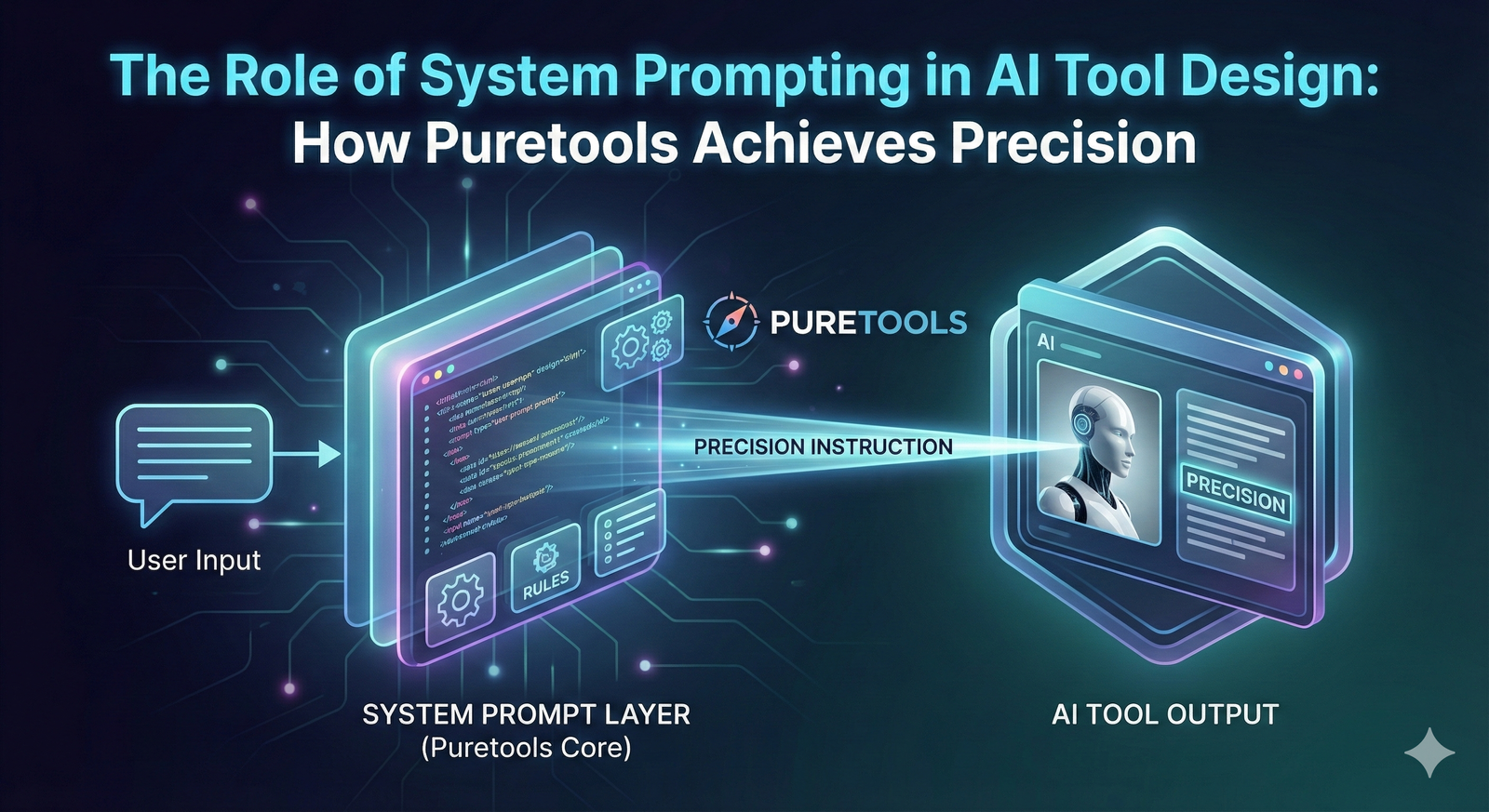

The true power of a Large Language Model (LLM) or any generative AI system is not found in its raw intelligence, but in its obedience. While the user's input (the user prompt) captures the creative request, it is the hidden, pre-configured System Prompt that governs the AI's behavior, persona, and output format. For developers building reliable, specialized AI applications, the System Prompt is the single most critical component of AI tool design.

This article delves into the strategic role of system prompting as the foundation for precision in generative AI. We will explore how this hidden layer of instruction transforms a general-purpose model into a specialized expert, and how Puretools leverages advanced system prompting techniques to deliver architect-grade AI prompt engineering with unparalleled reliability.

1. Defining the System Prompt: The AI's Operating System

In applications built on modern LLMs (such as Gemini, GPT, or Claude), the System Prompt is a set of instructions provided to the model before the user's input. It is the model's operating manual, defining its role, constraints, and objectives for the entire session.
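As a minimal sketch of that ordering, the snippet below assembles a chat-style message list in which the system prompt comes first and the user's request second. The `system` and `user` role names follow a convention used by many chat APIs; the function name `build_messages` and the example strings are illustrative assumptions, not Puretools code.

```python
from typing import Dict, List


def build_messages(system_prompt: str, user_input: str) -> List[Dict[str, str]]:
    """Return a chat-style message list with the system prompt placed first."""
    return [
        {"role": "system", "content": system_prompt},  # hidden, pre-configured
        {"role": "user", "content": user_input},       # the creative request
    ]


messages = build_messages(
    "You are an Elite Prompt Engineer. Do not engage in conversation.",
    "A lighthouse at dusk in a storm",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The user only ever writes the second message; the first is fixed by the tool designer and applies to the whole session.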

1.1. The Three Pillars of System Prompting

Effective system prompting relies on three core pillars to steer the model's behavior away from its general-purpose nature and toward a specific, reliable function:

| Pillar | Description | Strategic Goal |
|---|---|---|
| Persona Injection | Assigning a specific role or identity to the AI (e.g., "You are a Senior Software Engineer," "You are a 20-year veteran SEO expert"). | Establishes Tone and Expertise: Ensures the output uses the correct vocabulary, tone, and knowledge base. |
| Constraint Setting | Defining strict rules, boundaries, and forbidden actions (e.g., "Do not apologize," "Output must be valid JSON," "Never discuss politics"). | Ensures Safety and Reliability: Prevents the model from deviating from the task or generating unwanted content. |
| Output Formatting | Specifying the exact structure of the response (e.g., "Return a Markdown table," "Use only a single sentence," "Wrap the response in XML tags"). | Enables Automation: Guarantees the output is machine-readable and ready for integration into downstream systems. |
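The three pillars can be kept as separate fields and joined into a single system prompt at request time. The sketch below is one possible way to do that; the `SystemPromptSpec` class, its field names, and the rendered layout are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SystemPromptSpec:
    persona: str                                          # Persona Injection
    constraints: List[str] = field(default_factory=list)  # Constraint Setting
    output_format: str = ""                               # Output Formatting

    def render(self) -> str:
        """Join the three pillars into one system prompt string."""
        parts = [f"You are {self.persona}."]
        if self.constraints:
            parts.append("Rules:")
            parts.extend(f"- {rule}" for rule in self.constraints)
        if self.output_format:
            parts.append(f"Output format: {self.output_format}")
        return "\n".join(parts)


spec = SystemPromptSpec(
    persona="a Senior Software Engineer",
    constraints=["Do not apologize.", "Never discuss politics."],
    output_format="Return a Markdown table.",
)
print(spec.render())
```

Keeping the pillars separate makes it easy to change one of them, such as the output format, without touching the other two.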

Without a robust System Prompt, the model falls back on its general-purpose defaults, resulting in inconsistent, verbose, and often unusable outputs.

2. The Precision Problem in Generative AI

The non-deterministic nature of generative AI is its greatest strength and its greatest weakness. While creativity thrives on randomness, AI tool design demands precision.

2.1. The "Drift" Phenomenon

When a user asks a general-purpose model to generate a Midjourney prompt, the model often "drifts," including irrelevant artistic flourishes, failing to use the correct technical parameters, or simply returning a generic, low-quality suggestion. This drift occurs because the model is trying to be helpful and conversational, rather than precise and technical.

For a tool like Puretools, which promises precision in AI prompt engineering, this drift is unacceptable. The output must be an architect-grade blueprint, not a casual suggestion.

2.2. The Solution: System Prompting as Model Steering

System prompting is the mechanism used to counteract this drift. It acts as a constant gravitational pull, forcing the model to stay within the boundaries of the desired task.

Example: Instead of asking the model to "write a prompt," the System Prompt instructs the model: "You are an Elite Prompt Engineer. Your sole function is to translate the user's creative request into a structured, technically valid prompt for Midjourney V7.1. You must include the --style raw parameter and a specific camera lens in every output. Do not engage in conversation."

This instruction set is invisible to the user but is the secret sauce that ensures every output from the Puretools AI Prompt Generator is professional-grade.
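One way to check that the steering is working is to test outputs for the two failure modes described above: conversational filler and missing technical parameters. The sketch below is illustrative only; the `CHATTY_OPENERS` list and the function name `drift_issues` are assumptions, and a real tool would need a broader check.

```python
from typing import List

# Hypothetical markers of conversational drift; extend as needed.
CHATTY_OPENERS = ("sure", "here is", "here's", "certainly", "i hope")
REQUIRED_PARAMETER = "--style raw"  # taken from the example system prompt


def drift_issues(output: str) -> List[str]:
    """Return a list of human-readable drift problems found in the output."""
    issues = []
    text = output.strip().lower()
    if text.startswith(CHATTY_OPENERS):
        issues.append("conversational opener")
    if REQUIRED_PARAMETER not in text:
        issues.append(f"missing {REQUIRED_PARAMETER}")
    return issues


print(drift_issues("Sure! Here is a prompt: a castle at dawn"))
print(drift_issues("castle at dawn, 35mm lens, golden hour --style raw"))
```

An empty list means neither problem was detected, which is not the same as proof that the output is good.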

3. How Puretools Masters System Prompting for Precision

Puretools is built on a multi-layered system prompting architecture that goes far beyond simple persona injection. It dynamically adjusts the System Prompt based on the user's selections, ensuring the underlying LLM (such as Gemini 2.5 Flash) is always operating with maximum focus and expertise.

3.1. Dynamic Persona Injection

Puretools doesn't just use one persona; it uses a dynamic persona that adapts to the user's goal.

| User Goal | Dynamic Persona Injected | Output Focus |
|---|---|---|
| Image Prompt | "Elite Prompt Engineer specializing in cinematic lighting and photographic realism." | Technical parameters, camera angles, lighting terms. |
| Video Prompt | "Hollywood Storyboard Artist and Cinematographer." | Camera movements, scene transitions, aspect ratios for film. |
| E-commerce Prompt | "Commercial Product Photographer and Studio Lighting Expert." | Clean backgrounds, soft shadows, product material accuracy. |

This dynamic approach steers the model toward the vocabulary and conventions of the most relevant discipline, improving the quality of the output.
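A simple way to picture dynamic persona injection is a lookup from the user's selected goal to a persona string, which is then placed at the start of the system prompt. The sketch below uses the personas from the table; the goal keys, the `system_prompt_for` function, and the closing instruction are assumptions for illustration, not the actual Puretools implementation.

```python
PERSONAS = {
    "image": "Elite Prompt Engineer specializing in cinematic lighting and photographic realism",
    "video": "Hollywood Storyboard Artist and Cinematographer",
    "ecommerce": "Commercial Product Photographer and Studio Lighting Expert",
}


def system_prompt_for(goal: str) -> str:
    """Build a system prompt whose persona depends on the selected goal."""
    try:
        persona = PERSONAS[goal]
    except KeyError:
        raise ValueError(f"Unknown goal: {goal!r}") from None
    # Pick "a" or "an" so the sentence reads naturally for each persona.
    article = "an" if persona[0].lower() in "aeiou" else "a"
    return f"You are {article} {persona}. Do not engage in conversation."


for goal in PERSONAS:
    print(system_prompt_for(goal))
```

Rejecting unknown goals explicitly avoids silently falling back to a generic persona.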

3.2. The Constraint Checklist: Enforcing Technical Standards

The most powerful technique employed by Puretools is the use of a Constraint Checklist within the System Prompt. This forces the LLM to perform a multi-step reasoning process before generating the final output.

The System Prompt includes an instruction like: "Before generating the final prompt, you must internally confirm the following checklist items have been met: 1. Is a specific lighting term used? 2. Is a camera/lens specification included? 3. Is the target model's required parameter (e.g., --style raw) present? 4. Is the output wrapped in the required JSON structure?"

This technique, which borrows from Chain-of-Thought prompting, pushes the model to check its draft against a set of non-negotiable technical standards before answering, which substantially improves adherence to the user's technical requirements.
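The checklist described here runs inside the model, but the same items can also be re-checked in code after generation as an extra safeguard. The sketch below covers the first three checklist items for a plain prompt string. The lighting vocabulary, the lens pattern, and the function name `run_checklist` are all assumptions made for illustration.

```python
import re
from typing import Dict

# Hypothetical vocabulary; a real tool would maintain a much larger list.
LIGHTING_TERMS = ("golden hour", "rim light", "softbox", "volumetric", "backlit")
LENS_PATTERN = re.compile(r"\b\d{2,3}mm\b")  # matches e.g. "35mm", "85mm"
REQUIRED_PARAMETER = "--style raw"


def run_checklist(prompt: str) -> Dict[str, bool]:
    """Evaluate a generated prompt against the first three checklist items."""
    text = prompt.lower()
    return {
        "lighting_term": any(term in text for term in LIGHTING_TERMS),
        "lens_spec": bool(LENS_PATTERN.search(text)),
        "required_parameter": REQUIRED_PARAMETER in text,
    }


result = run_checklist("portrait of a violinist, rim light, 85mm lens --style raw")
print(result)
print("passes" if all(result.values()) else "needs regeneration")
```

A failed item can be used to trigger a retry or to report which standard the output missed.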

3.3. Integrating Structured Data (JSON)

As discussed in our previous article, JSON prompts are the future of reliable AI. Puretools integrates this concept directly into its System Prompt architecture.

The System Prompt contains a final, non-negotiable instruction: "Your final output must be a valid JSON object conforming to the PromptSchemaV2.1." This ensures that the output is not only high-quality but is also instantly machine-readable, enabling workflow automation and seamless integration into external systems.
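Because an instruction alone cannot guarantee valid output, a downstream system would typically parse and verify the JSON before using it. The sketch below shows that step with Python's standard `json` module. The fields of PromptSchemaV2.1 are not given in this article, so `REQUIRED_FIELDS` is a hypothetical stand-in, and `parse_prompt_json` is an illustrative name.

```python
import json
from typing import Any, Dict

# Hypothetical stand-in for PromptSchemaV2.1, whose real fields are not given here.
REQUIRED_FIELDS = {"prompt": str, "parameters": list}


def parse_prompt_json(raw: str) -> Dict[str, Any]:
    """Parse model output and verify the assumed required fields and types."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("Output must be a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise ValueError(f"Field {name!r} is missing or not {expected_type.__name__}")
    return data


parsed = parse_prompt_json('{"prompt": "castle at dawn, 35mm", "parameters": ["--style raw"]}')
print(parsed["parameters"])
```

Raising a single exception type for every failure keeps the calling code simple: any `ValueError` means the output should be rejected or regenerated.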

4. System Prompting as a Competitive Advantage in AI Tool Design

For any company building a specialized AI tool, the System Prompt is the intellectual property that differentiates a product from a simple wrapper around an API.

4.1. Reducing User Friction

A well-designed System Prompt dramatically lowers the barrier to entry for the user. The user no longer needs to be an AI prompt engineering expert; they only need to know what they want. The tool handles the complex, technical translation in the background. This is the core value proposition of Puretools: Precision without the Prompting Pain.

4.2. Future-Proofing Against Model Updates

As LLMs and image models are updated (e.g., Midjourney V6 to V7), their internal behaviors change. A tool built on a robust System Prompt architecture can be quickly updated by simply modifying the System Prompt's instructions and Constraint Checklist, without having to rewrite the entire application. This provides a crucial level of agility and future-proofing for the AI tool design.
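One way to get this agility is to keep version-specific details in a small data structure and render the system prompt from it, so that supporting a new model version means adding an entry rather than changing code. The sketch below illustrates the idea. The profile keys, the template wording, and the parameter values are assumptions and should be checked against the target model's current documentation.

```python
from typing import Dict, List

# Illustrative per-version profiles; the parameter values are assumptions.
MODEL_PROFILES: Dict[str, List[str]] = {
    "midjourney-v6": ["--v 6", "--style raw"],
    "midjourney-v7": ["--v 7", "--style raw"],
}

TEMPLATE = (
    "You are an Elite Prompt Engineer. Translate the user's request into a "
    "prompt for {target}. Every output must include: {required}."
)


def build_system_prompt(target: str) -> str:
    """Render the system prompt for one target model from its profile."""
    required = ", ".join(MODEL_PROFILES[target])
    return TEMPLATE.format(target=target, required=required)


print(build_system_prompt("midjourney-v7"))
```

The application logic stays the same across versions; only the profile table changes.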

4.3. The Ethical and Safety Imperative

System Prompts are also a first line of defense against model misuse. By setting clear boundaries and forbidding the generation of harmful, biased, or illegal content, the System Prompt acts as the tool's ethical guardrail, supporting responsible AI tool design and deployment.

Conclusion: The Architect of AI Precision

The era of casual, conversational AI is giving way to the era of precise, programmatic AI. The System Prompt is the architect's blueprint, the operating system that dictates the AI's behavior and ensures its reliability.

Puretools has embraced this philosophy, using dynamic persona injection, constraint checklists, and structured data formatting to transform a powerful, general-purpose LLM into a hyper-specialized AI prompt engineering tool. We believe that true innovation in AI lies not just in the models themselves, but in the intelligent design of the systems that control them.

By mastering the art of system prompting, Puretools delivers the precision, consistency, and quality that professional creators and developers demand.

Ready to experience the difference that architect-grade system prompting makes?

Explore the Technology Behind the Precision on the Puretools platform and elevate your AI prompt engineering today.
