Context Engineering is the New Prompt Engineering: Designing the World Where AI Thinks

The initial wave of the generative AI revolution was defined by the prompt. Users, developers, and enthusiasts alike became obsessed with the art of crafting the perfect linguistic query: the "magic words" that could unlock a model's creative potential. This era of AI prompt engineering was exciting, but it was ultimately a transitional phase.

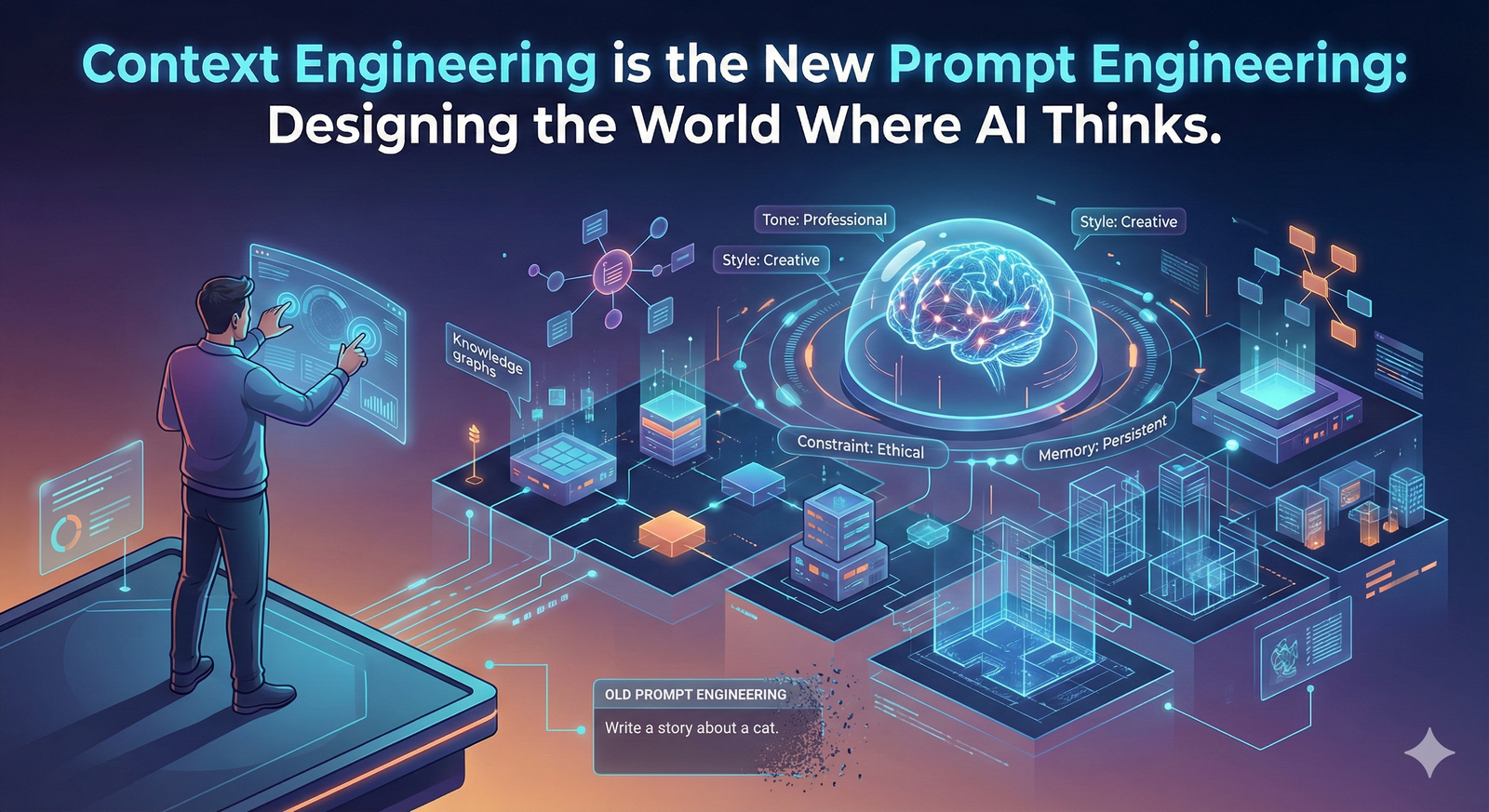

Today, as generative AI moves from a novelty to a mission-critical technology, the industry is undergoing a profound shift. The focus is moving away from the fleeting, one-off command and toward the persistent, structured environment that surrounds the model. Context Engineering is the new Prompt Engineering. It is no longer about clever wording; it is about designing environments where AI can think with depth, consistency, and purpose.

This comprehensive analysis will explore why the era of the simple prompt is ending, how Context Engineering provides the necessary foundation for enterprise AI adoption, and how tools like Puretools are built on this new paradigm to deliver precision and workflow automation.

1. The Fragility of the Linguistic Prompt

The initial fascination with prompt engineering stemmed from the idea that a user could "hack" the model's behavior through linguistic finesse. However, this approach proved fundamentally fragile and unscalable for professional use.

1.1. The Limits of Unstructured Text

Large Language Models (LLMs) are trained on vast, largely unstructured datasets, which makes them good at interpreting loose instructions but poor at guaranteeing a specific, repeatable output. A user prompt is itself unstructured text, and because models typically sample their output token by token, the same prompt can produce different results from one run to the next.

- Linguistic Ambiguity: Natural language is inherently ambiguous. A word can have multiple meanings, and the model's interpretation can change based on subtle shifts in its internal state or the surrounding conversation.

- Prompt Overload: As users attempted to achieve more complex results, prompts grew longer, leading to a phenomenon where the model struggled to prioritize elements, often forgetting instructions placed early in the text.

- The Chaos of Production: In a production environment, this fragility is disastrous. A prompt that works perfectly on Monday might fail on Tuesday due to a minor model update or a slight variation in the input. Companies quickly learned that models forget, drift, and misinterpret context unless they are constantly spoon-fed the necessary information.
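One source of the non-determinism described above is easy to see in miniature. The sketch below is illustrative only: the `logits` values and the `sample_token` helper are made up for this example, and a real model scores tens of thousands of candidate tokens rather than three. It shows how temperature-based sampling can pick different continuations for an identical prompt.

```python
import math
import random

def sample_token(logits: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Pick one token from made-up scores; higher temperature means more variety."""
    if temperature <= 0:
        # Greedy decoding: always return the highest-scoring token.
        return max(logits, key=logits.get)
    scaled = {token: score / temperature for token, score in logits.items()}
    top = max(scaled.values())
    # Softmax weights (shifted by the max for numerical stability).
    weights = [math.exp(value - top) for value in scaled.values()]
    return rng.choices(list(scaled), weights=weights, k=1)[0]

# Hypothetical scores for the word following "The sky is".
logits = {"blue": 2.0, "grey": 1.5, "clear": 1.0}
rng = random.Random(0)
greedy = sample_token(logits, 0.0, rng)
sampled = {sample_token(logits, 1.0, rng) for _ in range(200)}
```

With the temperature at zero the choice is always the top-scoring token. At a temperature of 1.0 the lower-scoring tokens are picked some of the time, which is the small-scale version of a prompt that "works on Monday and fails on Tuesday."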

The core realization is that prompt engineering was never meant to scale. It was a technique for exploration, not a foundation for workflow automation.

2. Context Engineering: The System-Level Discipline

Context Engineering is the system-level discipline of orchestrating the data, memory, and structure that fill the model's context window. If prompt engineering is what you do inside the context window, Context Engineering is how you decide what fills that window.

The goal is to construct a stable, resilient landscape where the model's reasoning remains anchored in verifiable, relevant information. This shift moves the focus from the user's immediate request to the underlying infrastructure that maintains consistency and reliability.

2.1. Retrieval-Augmented Generation (RAG): The Cornerstone

The most prominent example of Context Engineering in action is Retrieval-Augmented Generation (RAG). RAG systems solve the problem of model knowledge and memory by providing the model with just-in-time, external information.

Instead of relying on the model's internal, static training data (which is often outdated or generic), RAG orchestrates a process where the system first retrieves relevant documents, data points, or facts from a curated knowledge base (often stored in a vector database) and then injects that information into the prompt as context.
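The retrieve-then-inject flow can be sketched in a few lines. This is a minimal illustration under loud assumptions: the three-sentence `KNOWLEDGE_BASE` is invented, and a simple bag-of-words cosine similarity stands in for the learned embeddings and vector database a production RAG system would use.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; a real system would keep embeddings in a vector database.
KNOWLEDGE_BASE = [
    "Refunds are available within 30 days of purchase.",
    "Standard shipping takes 5 to 7 business days.",
    "Support is available by email on weekdays.",
]

def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words counts, a stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, k: int = 1) -> list[str]:
    """Step 1: return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(KNOWLEDGE_BASE, key=lambda doc: cosine(q, tokenize(doc)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, k: int = 1) -> str:
    """Step 2: inject the retrieved documents into the prompt as context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"

prompt = build_prompt("How long does shipping take?")
```

The string returned by `build_prompt` is what would be sent to the model. The user only typed the question; the system decided which facts accompany it.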

| Technique | Prompt Engineering | Context Engineering (RAG) |
|---|---|---|
| Goal | Get the best possible answer from the model's internal knowledge. | Get the most accurate, up-to-date, and verifiable answer. |
| Mechanism | Clever wording, linguistic cues. | Orchestration of external data, memory, and structure. |
| Consistency | Low; highly dependent on model's interpretation. | High; anchored in verifiable, external data. |
| Scalability | Low; requires constant manual refinement. | High; automated retrieval ensures relevant context is always present. |

Advanced RAG techniques, such as re-ranking retrieved documents, query expansion, and multi-hop reasoning, build on this basic retrieve-then-inject pattern and are where much of the frontier of AI system design now lies.

3. The Architecture of Understanding: Layered Context

A well-engineered context is not a single block of text; it is a layered architecture that functions like urban planning for the model's cognition. This architecture ensures that the model can navigate complexity without getting lost in its own vast knowledge.

3.1. Layer 1: The System Prompt (Persistent Identity)

This is the foundational layer, defining the model's persona and constraints. As explored in our article on System Prompting, this layer is crucial for setting the tone and technical boundaries.

- Function: Defines who the AI is (e.g., "Elite Prompt Engineer") and how it must behave (e.g., "Output must be a valid JSON object").

- Persistence: Remains constant throughout the session, ensuring a stable, professional persona.

3.2. Layer 2: The Retrieved Context (Just-in-Time Knowledge)

This layer is dynamic and is populated by the RAG system. It provides the model with the specific, non-negotiable facts needed to complete the task.

- Function: Injects external data, such as a company's internal policy, a user's previous purchase history, or the technical specifications of a Midjourney V7.1 parameter.

- Precision: Ensures the model's response is grounded in reality, dramatically reducing the risk of hallucination.

3.3. Layer 3: The User Prompt (Creative Intent)

This is the final, smallest layer: the user's creative request. It is no longer the sole source of instruction but the final piece of the puzzle, guided by the two layers of context above it.

- Function: Conveys the user's immediate goal (e.g., "Generate a prompt for a photorealistic image of a cat").

- Efficiency: Because the context layers have already provided the necessary structure and knowledge, the user's prompt can be concise and focused purely on creative intent.
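The three layers can be assembled into a single request in a few lines. The sketch below assumes the role/content message format that many chat-style model APIs accept; the `assemble_context` helper and the sample strings are hypothetical, not any particular product's implementation.

```python
def assemble_context(system_prompt: str, retrieved: list[str], user_prompt: str) -> list[dict]:
    """Build a chat-style message list: Layer 1, then Layer 2, then Layer 3."""
    # Layer 1: persistent identity and constraints.
    messages = [{"role": "system", "content": system_prompt}]
    # Layer 2: just-in-time facts, included only when retrieval found something.
    if retrieved:
        facts = "\n".join(f"- {fact}" for fact in retrieved)
        messages.append({"role": "system", "content": f"Reference facts:\n{facts}"})
    # Layer 3: the user's short creative request.
    messages.append({"role": "user", "content": user_prompt})
    return messages

messages = assemble_context(
    system_prompt="You are an elite prompt engineer. Output must be a valid JSON object.",
    retrieved=["The target model supports aspect ratios 1:1 and 16:9."],  # made-up fact
    user_prompt="Generate a prompt for a photorealistic image of a cat.",
)
```

Note how short the user message is relative to the whole request: the first two layers carry the structure and knowledge, so the third only has to state intent.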

4. The Contextual Design Imperative: From Text to Data

The shift to Context Engineering is inextricably linked to the need for structured data for generative AI. The most reliable way to inject context is not through long paragraphs of text, but through machine-readable formats like JSON prompts.

4.1. The Role of JSON in Context Engineering

As demonstrated in our analysis of JSON Prompts, structured data provides the necessary programmatic contract for reliable AI.

- Context Injection: Instead of injecting a paragraph describing a product's features, a Context Engineering system injects a JSON object containing the product's SKU, price, and features. This structured data is easier for the model to process and less likely to be misinterpreted.

- Output Consistency: The final output of the AI is often required to be a JSON object, which is then used to update a database or feed another system. The entire pipeline, from input context to output, is governed by structured data, which is what makes end-to-end workflow automation possible.
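Both halves of that contract can be sketched with the standard `json` module. The product record, the `REQUIRED_KEYS` schema, and the helper names below are assumptions made for illustration; a real system would likely use a fuller schema validator.

```python
import json

# Hypothetical product record to inject as context.
product = {"sku": "SKU-1042", "price": 19.99, "features": ["waterproof", "USB-C"]}

def build_context(record: dict) -> str:
    """Input side: serialize the record instead of describing it in prose."""
    return "Product data (JSON):\n" + json.dumps(record, indent=2, sort_keys=True)

# Assumed output contract: the fields downstream systems expect, with their types.
REQUIRED_KEYS = {"sku": str, "summary": str}

def parse_output(raw: str) -> dict:
    """Output side: reject any model response that breaks the contract."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected_type in REQUIRED_KEYS.items():
        if not isinstance(data.get(key), expected_type):
            raise ValueError(f"missing or invalid field: {key}")
    return data

context = build_context(product)
result = parse_output('{"sku": "SKU-1042", "summary": "A waterproof charger."}')
```

Validating on the way out matters as much as structuring on the way in: a response that fails `parse_output` can be retried or flagged before it ever reaches a database.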

4.2. Context Engineering in Generative Image Tools

In the realm of image generation, Context Engineering is the difference between a simple AI prompt generator and a professional AI prompt engineering tool.

- Model Steering: Tools like Puretools use Context Engineering to dynamically inject the correct technical context. When a user selects a target model (e.g., Nano Banana), the system injects the specific context required for that model's behavior, ensuring the prompt is optimized for its unique strengths (e.g., character consistency or text accuracy).

- Memory and Iteration: For complex projects, Context Engineering maintains a memory of previous generations, ensuring that subsequent prompts build logically on the last, allowing for a cohesive series of images rather than a collection of random outputs.
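To make the two ideas concrete, here is a rough sketch of model steering and generation memory working together. It is not the Puretools implementation: the `MODEL_PROFILES` table, its guidance strings, and the `ImageSession` class are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical per-model guidance; real profiles would be far more detailed.
MODEL_PROFILES = {
    "nano-banana": "Prioritize character consistency and accurate in-image text.",
    "default": "Describe subject, style, and lighting explicitly.",
}

@dataclass
class ImageSession:
    model: str
    history: list[str] = field(default_factory=list)
    max_history: int = 3  # how many earlier requests to carry forward

    def build_prompt(self, request: str) -> str:
        """Combine model guidance, recent history, and the new request."""
        profile = MODEL_PROFILES.get(self.model, MODEL_PROFILES["default"])
        recent = self.history[-self.max_history:]
        parts = [f"Model guidance: {profile}"]
        if recent:
            parts.append("Previous generations:\n" + "\n".join(f"- {p}" for p in recent))
        parts.append(f"Request: {request}")
        self.history.append(request)  # remember this request for the next call
        return "\n\n".join(parts)

session = ImageSession(model="nano-banana")
first = session.build_prompt("a ginger cat on a sofa")
second = session.build_prompt("the same cat in a garden")
```

The second prompt carries the first request along with it, which is what lets "the same cat" resolve to something specific instead of a new, unrelated animal.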

5. The Future of AI: Co-Intelligence and Contextual Design

The age of Context Engineering demands a new kind of design thinking. AI products must be treated as living ecosystems, not static tools.

5.1. The Contextual Feedback Loop

Contextual design introduces a dynamic feedback loop: the model's behavior informs the context, and the context reshapes the model's behavior. This continuous cycle drives adaptiveness and intelligence.

Example: If a user repeatedly rejects a prompt generated by the system, the Context Engineering layer records this negative feedback and injects a new constraint into the System Prompt: "Avoid the use of [rejected style] in future generations." The system learns and evolves with every interaction.
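The example above can be sketched as a small class. The `FeedbackContext` name and the threshold of two rejections (one reading of "repeatedly") are assumptions for illustration.

```python
from collections import Counter

class FeedbackContext:
    """Turns repeated rejections into constraints appended to the system prompt."""

    def __init__(self, base_system_prompt: str, threshold: int = 2):
        self.base = base_system_prompt
        self.threshold = threshold  # rejections needed before a style is banned
        self.rejections = Counter()

    def record_rejection(self, style: str) -> None:
        self.rejections[style] += 1

    def system_prompt(self) -> str:
        """Rebuild the system prompt with one constraint per repeatedly rejected style."""
        banned = sorted(s for s, n in self.rejections.items() if n >= self.threshold)
        constraints = [f"Avoid the use of {s} in future generations." for s in banned]
        return "\n".join([self.base, *constraints])

ctx = FeedbackContext("You are a prompt assistant.")
ctx.record_rejection("watercolor")
after_one = ctx.system_prompt()
ctx.record_rejection("watercolor")
after_two = ctx.system_prompt()
```

A single rejection leaves the system prompt unchanged; the second one adds the constraint. Keeping the threshold above one avoids overreacting to a one-off dislike.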

5.2. Context Engineering and Enterprise Scalability

For enterprise AI adoption, Context Engineering is the key to scalability. It allows organizations to:

- Standardize Knowledge: Ensure all AI applications draw from the same single source of truth (via RAG).

- Control Behavior: Maintain a consistent brand voice and compliance across all AI-generated content (via System Prompts).

- Automate Processes: Integrate AI outputs seamlessly into existing software (via JSON prompts).

Conclusion: The Age of Environments Has Begun

Prompt engineering taught us how to speak to machines. Context Engineering teaches us how to build the worlds they think within. The frontier of AI design now lies in memory, continuity, and adaptive structure. Every powerful system of the next decade will be built not on clever wording but on coherent context.

The age of the simple prompt is ending. The age of environments has begun. Those who learn to engineer context won't just get better outputs; they'll build systems whose answers stay consistent and grounded. This is the foundation of AI precision and the future of workflow automation.

Puretools is built on this very principle. By translating your creative intent into a structured, context-rich data object, we ensure that the underlying generative model always operates with maximum consistency and reliability. We don't just write better prompts; we design the conditions for perfect results.

Ready to move beyond the prompt craze?

Explore the Technology Behind the Precision on the Puretools platform and embrace the future of Context Engineering.
