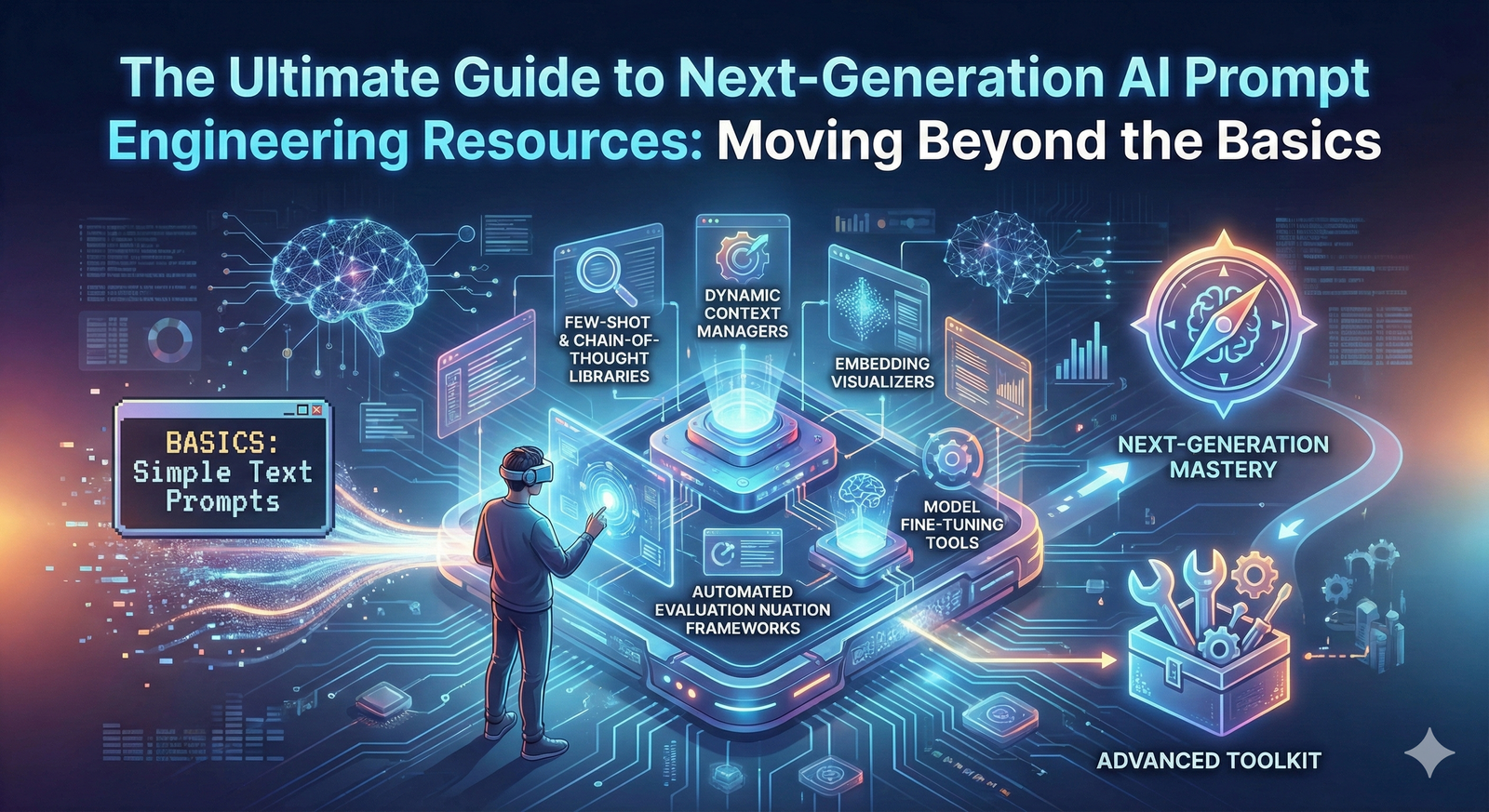

The Ultimate Guide to 3 Next-Generation AI Prompt Engineering Resources: Moving Beyond the Basics

The journey into generative AI often begins with the simple prompt. For many, AI prompt engineering is the first skill they acquire: a fascinating dance of words to coax creativity from a machine. Yet, as the technology matures, the focus shifts from the art of the prompt to the science of the system. You are here because you understand that the future of reliable AI lies not in "magic words," but in Context Engineering, the discipline of designing the environment in which AI can think with precision and consistency.

This comprehensive guide is designed for the advanced user, the developer, and the professional who has mastered the basics and is ready to explore the next generation of AI prompt engineering tools and resources. We will delve into three high-value resources that reflect the industry's pivot toward structured data for generative AI, workflow automation, and deep technical control.

1. The Context Engineering Imperative: Why Resources Must Evolve

The initial prompt craze was fueled by the illusion that a single, clever phrase could solve any problem. However, as use cases moved from one-off chats to enterprise AI adoption, the fragility of the unstructured prompt became evident. Models forget, drift, and misinterpret context, leading to inconsistent and unreliable outputs.

The new paradigm, Context Engineering, demands resources that focus on:

- Structure over Syntax: Prioritizing formats like JSON prompts and structured data to ensure machine-readable output.

- System Control: Utilizing system prompting and advanced frameworks to steer the model's behavior and maintain a consistent persona.

- Memory and Retrieval: Implementing Retrieval-Augmented Generation (RAG) to ground the model in external, verifiable knowledge.

The following resources represent the cutting edge of this shift, offering the technical depth required to build truly resilient AI applications.

2. Resource 1: The Structured Prompting Framework Handbook

The era of the simple text box is over. The most significant resource for the modern AI prompt engineering professional is the comprehensive guide to structured data for generative AI. This resource moves beyond basic frameworks like AUTOMAT or CO-STAR and focuses on the programmatic construction of the prompt.

2.1. The Shift to Programmatic Prompting

This handbook serves as the definitive guide to creating prompts that are inherently machine-readable. It emphasizes JSON prompts and other structured formats that reduce the ambiguity of natural language.

Key Focus: The resource provides detailed templates and best practices for defining the input and output of an LLM using a schema. This is crucial for workflow automation, where the AI's output must be instantly consumable by the next step in a software pipeline.
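To make the idea of a schema-defined prompt concrete, here is a minimal Python sketch that serializes a task, its input, and the expected output contract into a single JSON prompt. The field names (`task`, `input`, `output_format`) and the example schema are hypothetical choices for illustration, not a format that the handbook or any model provider mandates.

```python
import json

# Hypothetical output contract, written in JSON Schema style.
# The field names are illustrative only.
OUTPUT_SCHEMA = {
    "type": "object",
    "properties": {
        "title": {"type": "string"},
        "tags": {"type": "array", "items": {"type": "string"}},
        "confidence": {"type": "number"},
    },
    "required": ["title", "tags", "confidence"],
}


def build_json_prompt(task: str, user_input: str, schema: dict) -> str:
    """Assemble a machine-readable prompt stating the task, the input,
    and the exact JSON shape the model is expected to return."""
    prompt = {
        "task": task,
        "input": user_input,
        "output_format": {
            "type": "json",
            "schema": schema,
            "rules": [
                "Return a single JSON object and nothing else.",
                "Do not add keys that are not in the schema.",
            ],
        },
    }
    # sort_keys makes the serialized prompt identical across runs, which
    # keeps prompts easy to diff, cache, and version.
    return json.dumps(prompt, indent=2, sort_keys=True)


if __name__ == "__main__":
    print(build_json_prompt(
        "Summarize and tag the article.",
        "Context engineering designs the environment a model works in.",
        OUTPUT_SCHEMA,
    ))
```

Because the prompt is itself data, a pipeline can generate, diff, or version it like any other configuration object.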

Advanced Techniques Covered:

- Schema Definition: How to use Pydantic or similar libraries to define the exact structure of the desired output.

- Model Steering: Techniques for using the system prompting area to instruct the model to adhere strictly to the provided schema.

- Error Handling: Strategies for validating the AI's JSON output and implementing self-correction loops.
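The validation and self-correction steps above can be sketched in a few lines. This version is deliberately dependency-free: a standard-library `dataclass` plus manual type checks stands in for a Pydantic model, and `call_model` is a hypothetical callable (prompt in, raw text out) representing whatever LLM client you use. It illustrates the pattern; it is not the handbook's implementation.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class ArticleTags:
    # Hypothetical target schema; a Pydantic BaseModel would play this role.
    title: str
    tags: list[str]
    confidence: float


def validate(raw: str) -> ArticleTags:
    """Parse model output and check it against ArticleTags.
    Raises ValueError (json.JSONDecodeError is a subclass) on any mismatch."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    expected = {"title", "tags", "confidence"}
    if set(data) != expected:
        raise ValueError(f"keys must be exactly {sorted(expected)}, got {sorted(data)}")
    if not isinstance(data["title"], str):
        raise ValueError("title must be a string")
    if not isinstance(data["tags"], list) or not all(isinstance(t, str) for t in data["tags"]):
        raise ValueError("tags must be a list of strings")
    conf = data["confidence"]
    if isinstance(conf, bool) or not isinstance(conf, (int, float)):
        raise ValueError("confidence must be a number")
    return ArticleTags(data["title"], data["tags"], float(conf))


def generate_with_repair(call_model: Callable[[str], str], prompt: str,
                         max_attempts: int = 3) -> ArticleTags:
    """Self-correction loop: on a validation failure, feed the error back to the model."""
    current = prompt
    last_error = ""
    for _ in range(max_attempts):
        raw = call_model(current)
        try:
            return validate(raw)
        except ValueError as err:
            last_error = str(err)
            # Append the validator's message so the next attempt can fix it.
            current = (f"{prompt}\n\nYour previous reply was invalid: {last_error}\n"
                       "Return corrected JSON only.")
    raise RuntimeError(f"no valid output after {max_attempts} attempts: {last_error}")
```

With Pydantic v2, the hand-written checks in `validate` would collapse into a `BaseModel` subclass and a single `model_validate_json` call, and the resulting `ValidationError` message could be fed back to the model in the same way.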

2.2. Relevance to Puretools

This resource directly validates the core philosophy of Puretools. Our AI prompt generator is built on the principle of translating creative intent into a structured data object. The handbook provides the theoretical and technical justification for why our Structured JSON Outputs feature is essential for achieving high consistency and reliability in professional settings.

3. Resource 2: The Advanced RAG and Context Management Toolkit

One of the largest challenges in enterprise AI adoption is ensuring that the model uses correct, up-to-date, and proprietary information. This is the domain of Retrieval-Augmented Generation (RAG), and next-generation resources focus on advanced RAG techniques that go well beyond simple vector search.

3.1. Beyond Basic Retrieval

This toolkit is designed for the AI system design engineer, focusing on how to manage the context window effectively. It acknowledges that simply retrieving documents is not enough; the retrieved information must be optimized and prioritized before being injected into the prompt.
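Optimizing and prioritizing retrieved material before injection can be illustrated with a small packing step: rank chunks by relevance and admit them until a token budget is spent. Two simplifying assumptions are labeled in the code: tokens are approximated by whitespace-separated words, and the relevance `score` is assumed to come from an upstream retriever or re-ranker. The names `Chunk`, `pack_context`, and `build_prompt` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    score: float  # assumed: relevance score from a retriever or re-ranker


def approx_tokens(text: str) -> int:
    # Rough assumption: one token per whitespace-separated word.
    # A real system should count with the target model's own tokenizer.
    return len(text.split())


def pack_context(chunks: list[Chunk], budget: int) -> list[Chunk]:
    """Greedily keep the highest-scoring chunks that fit in the token budget."""
    selected: list[Chunk] = []
    used = 0
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        cost = approx_tokens(chunk.text)
        if used + cost <= budget:
            selected.append(chunk)
            used += cost
    return selected


def build_prompt(question: str, chunks: list[Chunk], budget: int) -> str:
    """Inject only the packed chunks, numbered so the model can cite them."""
    packed = pack_context(chunks, budget)
    context = "\n\n".join(f"[{i + 1}] {c.text}" for i, c in enumerate(packed))
    return f"Answer using only the sources below.\n\n{context}\n\nQuestion: {question}"
```

Greedy packing is the simplest policy: a chunk that does not fit is skipped, and smaller, lower-scoring chunks may fill the remaining space. That is often acceptable, but it is worth knowing when tuning the budget.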

Key Focus: Implementing advanced RAG techniques to improve the quality of the context injected into the model.

Advanced Techniques Covered:

- Query Transformation: Using an LLM to rewrite the user's query to better match the content in the vector database.

- Re-ranking: Employing secondary models (re-rankers) to prioritize the most relevant chunks of information, ensuring the most critical context is not lost in the limited context window.

- Multi-Hop Reasoning: Structuring the RAG process to perform multiple retrieval steps to answer complex questions that require synthesizing information from different sources.
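Re-ranking and multi-hop retrieval compose naturally into a loop. In this sketch, word overlap is a crude stand-in for a real cross-encoder re-ranker, and `retrieve` and `next_query` are hypothetical callables: the first would wrap your vector search, and the second is where an LLM would perform query transformation, reading the evidence gathered so far and proposing a follow-up query (or `None` to stop).

```python
from typing import Callable, List, Optional, Set


def tokenize(text: str) -> Set[str]:
    # Lowercased words with surrounding punctuation stripped.
    return {w.strip(".,?!").lower() for w in text.split()}


def rerank(query: str, docs: List[str], top_k: int) -> List[str]:
    """Second-pass ordering of candidates. Word overlap is a toy stand-in
    for a cross-encoder score; ties keep the retriever's original order."""
    q = tokenize(query)
    return sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)[:top_k]


def multi_hop(
    question: str,
    retrieve: Callable[[str], List[str]],
    next_query: Callable[[str, List[str]], Optional[str]],
    max_hops: int = 3,
    top_k: int = 2,
) -> List[str]:
    """Retrieve iteratively: each hop re-ranks its candidates, adds new
    evidence, and lets next_query decide whether another hop is needed."""
    evidence: List[str] = []
    query: Optional[str] = question
    for _ in range(max_hops):
        if query is None:
            break
        for doc in rerank(query, retrieve(query), top_k):
            if doc not in evidence:
                evidence.append(doc)
        query = next_query(question, evidence)
    return evidence
```

Capping the loop with `max_hops` keeps a chatty `next_query` from driving unbounded retrieval cost, and `top_k` limits how much each hop can add to the context window.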

3.2. Relevance to Puretools

This resource highlights the necessity of a robust Context Engineering layer. While Puretools focuses on the output prompt, the principles of RAG are essential for any tool that needs to maintain long-term memory or draw on external knowledge. For instance, a future Puretools feature could use RAG to retrieve a user's past Midjourney style preferences, injecting that context into the prompt to ensure consistency across all new generations.

4. Resource 3: LLM Prompt Compression and Latency Optimization Guide

As model context windows grow, so do the cost and latency of processing long prompts. The third essential resource focuses on the technical optimization of the prompt itself, a critical step for workflow automation and cost efficiency.

4.1. Making Every Token Count

Guides in this category often draw on research from major labs, such as Microsoft Research's LLMLingua, and provide practical methods for prompt compression. The goal is to reduce the number of tokens sent to the final, expensive LLM without losing the essential meaning or technical instructions.

Key Focus: Using smaller, cheaper language models to pre-process and compress the prompt, achieving significant cost savings and speed improvements.
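Whether compression actually saves money depends on what the compressor itself costs. A back-of-the-envelope model, under simplifying assumptions (input tokens only, a flat per-token price, and a small model that reads the full prompt once), looks like this, where $N$ is the original prompt length, $N'$ the compressed length, and $p_L$ and $p_S$ the per-token prices of the large and small models:

$$
\begin{aligned}
C_{\text{before}} &= p_L N \\
C_{\text{after}} &= p_S N + p_L N' \\
\Delta C = C_{\text{before}} - C_{\text{after}} &= p_L (N - N') - p_S N \\
\Delta C > 0 \iff \frac{N'}{N} &< 1 - \frac{p_S}{p_L}
\end{aligned}
$$

For example, if the small model costs one tenth as much per token as the large one ($p_S / p_L = 0.1$), compression pays off only when it keeps fewer than 90% of the tokens. The compression pass also adds its own processing time, which has to be weighed against the shorter prompt the large model reads.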

Advanced Techniques Covered:

- Token Filtering: Identifying and removing non-essential tokens (stop words, filler phrases) that do not contribute to the model's understanding.

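- Context Summarization: Using a smaller model to condense the long context into a concise, high-density summary before feeding it to the main model. (This is sometimes loosely called distillation, but it differs from knowledge distillation, which trains a smaller model to imitate a larger one.)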

- Controllable Compression: Ensuring that critical technical elements, such as JSON prompts or system prompting instructions, are never compressed or removed.
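Token filtering and controllable compression can be combined in one pass: compress the free text, but pass protected spans through untouched. This sketch is a naive stand-in. The stop-word list is illustrative, and the `<keep>` and `</keep>` markers are a hypothetical convention for flagging JSON prompts or system instructions. Methods such as LLMLingua instead use a small language model's token-level scores to decide what to drop.

```python
import re

# Illustrative filler list only; LM-based methods score tokens instead.
STOP_WORDS = {"a", "an", "the", "very", "really", "just", "basically", "please", "kindly"}

# Hypothetical convention: anything between <keep> and </keep> is never compressed.
PROTECTED = re.compile(r"<keep>(.*?)</keep>", re.DOTALL)


def filter_tokens(text: str) -> str:
    """Naive token filtering: drop stop words and filler from free text.
    Note that this also collapses runs of whitespace, including newlines."""
    kept = [w for w in text.split() if w.lower().strip(".,;:!?") not in STOP_WORDS]
    return " ".join(kept)


def compress(prompt: str) -> str:
    """Filter only the unprotected spans; protected spans pass through
    verbatim, with their markers removed."""
    parts = []
    last = 0
    for m in PROTECTED.finditer(prompt):
        parts.append(filter_tokens(prompt[last:m.start()]))
        parts.append(m.group(1))  # protected content, unchanged
        last = m.end()
    parts.append(filter_tokens(prompt[last:]))
    return " ".join(p for p in parts if p)
```

The protected span is the important design choice: the words "a" and "the" inside a JSON schema are data, not filler, so the compressor must never see them.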

4.2. Relevance to Puretools

This resource is vital for maintaining the speed and reliability of a professional AI prompt generator. By integrating prompt compression techniques, Puretools can ensure that even the most complex, multi-layered prompts (which include all the necessary structured data and system prompting instructions) are delivered to the final model in the most cost-effective and low-latency format possible. This commitment to efficiency is what separates a professional tool from a hobbyist application.

5. The Synthesis: Context Engineering as the Foundation for Precision

The three resources—Structured Prompting, Advanced RAG, and Prompt Compression—all point to the same conclusion: the future of AI prompt engineering is systemic. It is about building a robust, multi-layered system that manages the entire lifecycle of the prompt, from initial creative intent to final machine execution.

| Old Paradigm (Prompt Engineering) | New Paradigm (Context Engineering) |
|---|---|
| Focuses on the User Prompt (The Request). | Focuses on the System Prompt and RAG (The Environment). |
| Goal is Creative Output (Artistic Flair). | Goal is Consistent Output (Technical Reliability). |
| Relies on Linguistic Finesse (Clever Wording). | Relies on Structured Data (JSON Prompts). |
| Leads to Fragility and Drift. | Leads to Precision and Workflow Automation. |

Puretools is the embodiment of this new paradigm. Our tool is not a simple prompt optimizer; it is a Context Engineering platform that translates the user's creative request into a structured, optimized, and model-specific blueprint. By leveraging the principles found in these next-generation resources, Puretools ensures that the underlying generative model is always operating under the optimal conditions for precision and consistency.

Conclusion: The New Frontier of AI Mastery

The journey from simple text prompts to complex Context Engineering is the defining evolution of the generative AI industry. For those who seek to harness AI for professional, scalable, and reliable applications, mastering these next-generation resources is essential.

The age of the simple prompt is over. The age of environments has begun. By embracing structured data for generative AI, advanced RAG, and intelligent prompt compression, you move beyond the limitations of linguistic guesswork and step into the realm of programmatic AI system design.

Ready to move your prompting game to the next level?

Explore the Structured JSON Outputs and Model-Specific Syntax Engines on the Puretools platform today. We provide the AI prompt engineering tool built for the future of Context Engineering.
